Fixed-scope roadmaps are irrelevant for tech products because they try to impose certainty and predictability on an environment where reality suggests otherwise. Rather than denying uncertainty, we should embrace it and adjust our planning process to reflect the complex environment that tech products exist in. If you work in such an environment and follow a build-measure-learn approach, your plans will change as you uncover new insights, opportunities, and value propositions in each iteration.

Teams and organizations have often adopted a way of working akin to the build-measure-learn cycle popularized by Eric Ries in The Lean Startup. However, they still use fixed time/scope roadmaps that list the features expected to be completed by a given time. The same teams then run into problems when they uncover new insights during an iteration, after they’ve measured the impact of certain features or done user research to understand the customer problem more deeply. They face a dilemma: change the entire roadmap that was committed to at the beginning of the quarter, or continue with the previously committed features and ignore the new insight?

What if the product roadmap consisted of a list of questions or opportunities that need to be validated and tested by the teams to uncover hidden assumptions, rather than a list of features?

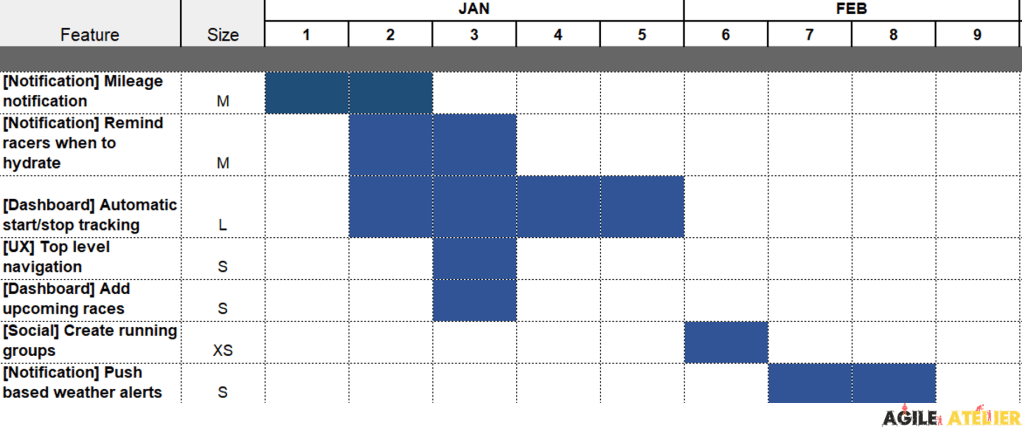

The image above shows a fixed time and scope roadmap for a team that’s building a running app to track users’ run times, races, and health stats. The development team estimates the size of the features and puts them on a roadmap. Looks nice and linear, right? But what if you notice, after building the feature “Add upcoming races”, that your reference users are less engaged with the app and not using either of those features? Would you continue building more socially themed features such as “Create running groups”?

Let’s take the roadmap above and make it better reflect the build-measure-learn culture that the development team uses, with the following steps:

- For each of the features on the original roadmap, ask your team what user problem they expect to solve once this feature is successfully implemented. Write those user problems and behaviors down.

- Ask the team how they would measure that user behavior, or how they would know that they’ve solved the user problem.

- For the new set of features, write down the metrics or KPIs you’d like them to help move the needle on. After implementing those features/experiments, you can see whether they had the desired effect on the KPIs, which helps you decide whether the experiment was successful or not.

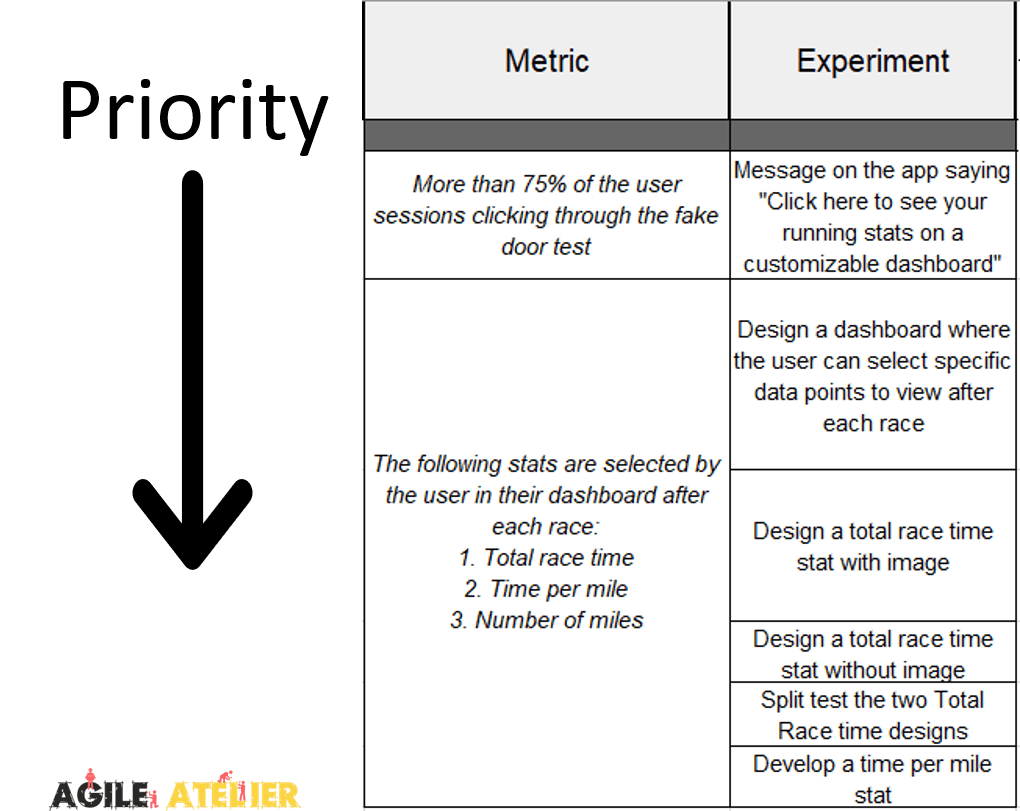

- In a problem-based roadmap (see image below), you’re committing to the desired user behaviors by validating and solving user problems, rather than delivering features based on assumptions.

- Validate the user problem through qualitative (e.g., diary studies) and quantitative (e.g., statistical modeling) data with your reference customers.

- Ensure that the user problem fits into your business scope.

- Map the user problem, metric and features on the problem-based roadmap. Treat this as a living document and add/remove features and metrics from it based on your learnings.
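As a rough illustration of the mapping step above, one row of a problem-based roadmap could be modeled as a small record that ties a user problem to a metric and a changeable list of candidate features. This is only a sketch: the class name, field names, the example user problem, and the example metric are all hypothetical; only the two feature names come from the running app example.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class RoadmapItem:
    """One row of a problem-based roadmap (all field names are illustrative)."""
    user_problem: str   # the validated problem you commit to, not a feature
    metric: str         # the KPI you expect to move if the problem is solved
    candidate_features: List[str] = field(default_factory=list)  # experiments, free to change

    def add_feature(self, name: str) -> None:
        # The roadmap is a living document: add ideas as you learn.
        if name not in self.candidate_features:
            self.candidate_features.append(name)

    def drop_feature(self, name: str) -> None:
        # Remove ideas that the measurements did not support.
        if name in self.candidate_features:
            self.candidate_features.remove(name)


# Hypothetical problem and metric for the running app.
item = RoadmapItem(
    user_problem="Runners lose motivation between races",
    metric="weekly active runners",
    candidate_features=["Add upcoming races"],
)
item.add_feature("Create running groups")
item.drop_feature("Add upcoming races")
print(item.candidate_features)
```

The point of the shape is that the problem and the metric stay stable while the feature list is expected to churn.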

Since roadmaps are predictive tools (with leading metrics) that help you prioritize and plan the work you will do, you want them to display the goals you want to achieve while leaving the tactical features out. This is because there are potentially infinite solutions to the user problems. Once you’ve aligned on the problems that need to be solved, allow the teams to use their backlogs to define the experiments that they see as usable/viable/feasible. Feasibility, especially, should be discussed at the backlog level for each potential solution, rather than at the roadmap level, where you are defining problems that each have potentially infinite solutions. The set of solutions that a team or organization decides to go with is what creates its competitive edge in the industry.

In the image above, you want to focus on prioritizing the user problems that fit your business needs, along with their related metrics, rather than trying to estimate exactly how long the work will take. Even if you somehow manage to accurately estimate how long certain experiments will take, there is no added benefit: you should not move on to further features/experiments without letting the learnings from earlier experiments shape what you do in subsequent ones. Then, for each problem, build a potential solution at the lowest fidelity required to see whether it helps you reach your metric.

But if you don’t achieve your metric, how do you know whether to stop experimenting or persist with further experiments? This is where the Additional Completion Criteria come in; this column gives more detail about when you should stop your experimentation should it fail to move the needle on the related metric(s). It should be filled in together with different customers of yours, to create buy-in from them. This gives the team further alignment on the best-case and worst-case impacts of their backlog experiments, rather than continuing failed experiments into infinity.

Below is what a sample backlog can look like, with experiments and epics to build based on the first two metrics that you want to impact, in this case. The experiments can then be broken down into more granular tasks with something like user story mapping.
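Separately from the backlog image, the stop-or-persist question behind the Additional Completion Criteria can be sketched as a tiny decision rule. The version below is an assumption, not a prescribed method: it treats the criteria as a target value plus a cap on the number of experiments, and it assumes a metric where higher is better. The names `CompletionCriteria` and `next_step` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CompletionCriteria:
    target: float         # metric value that counts as success (higher is better)
    max_experiments: int  # assumed budget: stop once this many experiments have run


def next_step(observed: float, experiments_run: int, criteria: CompletionCriteria) -> str:
    """Return 'done', 'stop', or 'persist' for one user problem."""
    if observed >= criteria.target:
        return "done"      # metric reached, move to the next problem
    if experiments_run >= criteria.max_experiments:
        return "stop"      # budget exhausted without moving the needle
    return "persist"       # still below target, but experiments remain


# Hypothetical numbers: aim for a 30% rate within at most three experiments.
criteria = CompletionCriteria(target=0.30, max_experiments=3)
print(next_step(0.32, 1, criteria))  # done
print(next_step(0.18, 2, criteria))  # persist
print(next_step(0.18, 3, criteria))  # stop
```

Real criteria will usually be richer than a single threshold and a counter, but writing them down in this explicit form is what keeps failed experiments from running indefinitely.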

One key prerequisite for working with a problem-based roadmap is to have cross-functional teams that are empowered, first and foremost, to solve customer problems that align with your business goals. The team should consist of people with the skill sets needed to carry out the design, development, user research, testing, and other tasks that are relevant for your product and organization. This allows the team to minimize silos, minimize dependencies, and switch quickly between discovery and delivery tracks.

The main benefit of using something like a problem-based roadmap is that you focus on communicating the intent of what you’re trying to accomplish in the next iteration(s). This leads to alignment on the problem that your product teams would like to solve, while allowing for autonomy at the tactical level to try different experiments/features that would address the problems/opportunities represented in your roadmap. A problem-based roadmap also lets you run different experiments in parallel to see which feature would be more successful in achieving the desired user behavior. Additionally, framing the problem you’d like to solve, or the assumptions you’d like to test, first encourages you to constantly think about the job that your product does for your customers and to use a JTBD-like approach to product management.

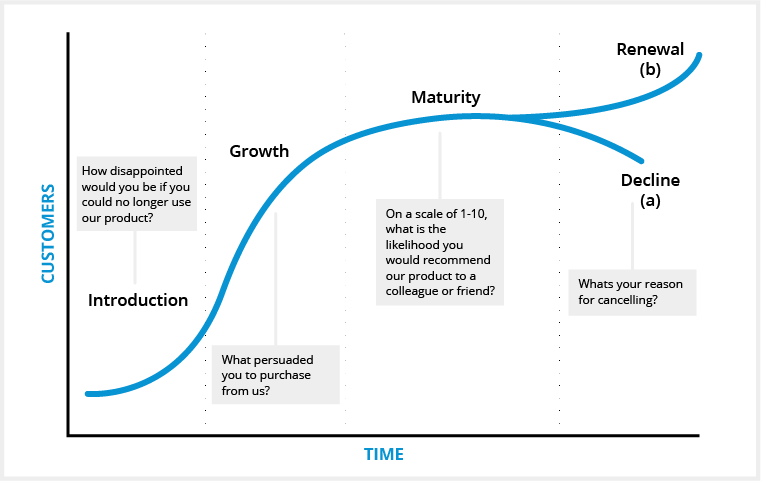

A problem-based roadmap is not only for teams working on products in the early development and growth stages. Teams working on products/services in the mature or declining stages of the lifecycle can also benefit from this approach by validating assumed customer behaviors and measuring the feasibility/usability/value of certain features. Planning your upcoming work in this manner can help you spot market trends and shifts in consumer preferences. For a product past its maturity stage, if you invalidate an assumed user behavior (with qualitative and statistically significant quantitative methods), you can dig deeper into why the user problem that allowed for mass adoption of your product is changing. This information could allow you to pivot your product’s business value out of decline and into a new introductory phase.

Prior to committing to the user problems you’d like to solve, you want to see how the problems you’re placing on the roadmap compare to the business objectives, with the help of something like the Dual Track Single Flow model. Not all user problems are worth solving, nor all solutions worth pursuing, for your business goals.
